How DPL Built an AI-Ready, Experiment-Driven Workforce in Just 2 Months

From AI Interest to Measurable Execution in Eight Weeks

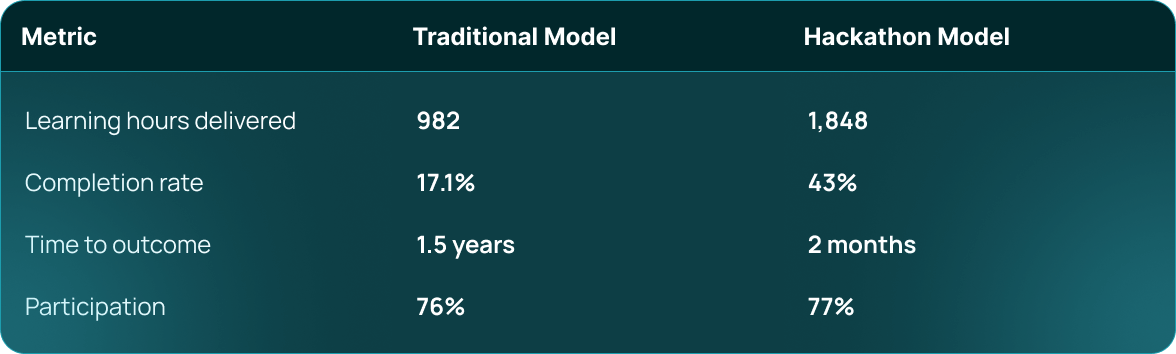

- 2× effective learning hours

- 43% completion rate (up from 17%)

- Deep engagement across technical and non-technical teams

- AI learning converted into working solutions

- Clear visibility into emerging talent and leadership

- Immediate operational automation beyond the hackathon

The program was an intentional reset of how learning converts into performance.

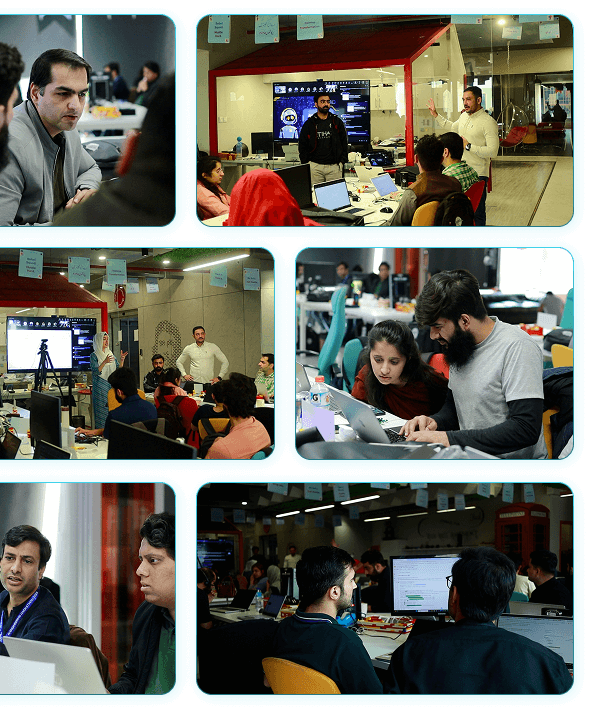

DPL Hackathon in Action

The difference wasn't forced check-ins. It was the structure, gamified weekly challenges, and intentionally designed FOMO.

Why DPL Took Action

Before this engagement, DPL was facing a familiar enterprise reality:

- AI interest existed, but actual capability was unknown

- Learning programs were slow, optional, and hard to measure

- Non-technical teams felt excluded from AI adoption

- Leadership lacked a clear signal of who was actually building skills

Traditional growth plans are optimized for time spent, not value created.

What DPL needed was not more learning, but learning that converts into action.

What Future At Work Designed

Learning Before Pressure. Proof Under Constraints.

Future At Work designed a learning-first hackathon model, where the hackathon was not the starting point, but the proof point.

AI Learning Sprint: applied learning

AI Innovation Hackathon: business problems, working together in teams

Hackathons fail when people are asked to build before they’re ready. This model fixes that.

Phase 1

AI Learning Sprint (32 Hours)

The sprint was designed as a launchpad: participants went through a tightly structured learning journey built for enterprise reality.

How it worked:

- One session per week

- 4 hours per session

- Hands-on work in every session

- Weekly mini-projects and demos

What made it different:

- No passive attendance

- No deferred application

- Learning validated through visible output

By the end of the sprint:

- Technical teams accelerated their workflows

- Non-technical teams gained functional AI fluency

- Confidence gaps across roles narrowed significantly

- Participants started spotting automation opportunities in their daily tasks

- Redundant, manual tasks were eliminated through practical AI workflows

- The resulting workflows met organizational standards, allowing real use beyond the hackathon

Phase 2

The AI Innovation Hackathon (12 Hours)

The learning sprint culminated in a high-pressure, high-energy hackathon.

Design principles:

- Real DPL problems only

- Mixed tech and non-tech teams

- Clear time constraints

- Leadership presence

- Public demos

This was the moment learning became undeniable proof.

This wasn’t experimentation for show. These were solutions built under real constraints.

Measurable Impact

DPL benchmarked this model against its traditional Growth Plan. The headline results were 2× effective learning hours and a 43% completion rate, up from 17%.

What the Hackathon and Learning Model Produced

The outcomes at DPL went beyond winning teams or final demos. They showed up across capability, behavior, and operations.

Business-Relevant AI Solutions

These results validated the model at a system level, not just an individual one.

One outcome fundamentally changed how AI capability was perceived internally:

Second place went to a non-technical participant working entirely solo.

- No engineering background

- No development team

- No prior coding experience

What enabled this:

- AI fundamentals from the learning sprint

- Effective prompting and validation

- Clear MVP framing

- Confidence built through structured, applied learning

This reframed AI from a role-based skill into a capability multiplier.

Not all of the impact appeared on the leaderboard.

One of the most telling outcomes came from DPL’s accounting function.

During the AI learning sprint, a non-technical accounting team member:

- Automated repetitive manual steps in their own workflow

- Applied AI tools and simple automations independently

- Reduced personal effort without relying on engineering support

It demonstrated that:

- AI learning translated into immediate productivity gains

- Capability extended beyond innovation teams

- Employees began solving their own operational problems

For leadership, this showed ROI shifting from future potential to present-day value.

In parallel, DPL’s Organizational Development team achieved a critical outcome.

- The OD team built a functioning, ship-ready employee growth and management system

- Strategy moved into deployable execution

- AI-enabled workflows supported real OD operations

This proved the model strengthens core organizational infrastructure.

The program naturally surfaced skills without mandate or pressure, giving leadership a live, credible talent signal.

When a non-technical employee can compete at this level, the organization’s ceiling changes.

Why This Model Works

Three design decisions made this scalable:

- Capability before competition.

- Inclusion by design: Non-tech teams were empowered, not accommodated.

- Visibility over compliance: Output was public. Progress was real.

The result: FOMO without mandates.

What This Meant for DPL

- A visibly AI-capable workforce

- Real prototypes tied to business needs

- Immediate operational efficiencies

- Clear insight into emerging talent

- A repeatable internal playbook

- A cultural shift toward experimentation

This was not just a successful hackathon, but a successful enterprise learning transformation.

Ready to Create Outcomes Like This in Your Organization?

Book an Enterprise Hackathon Strategy Session